From Agile to Agentic: Why Software Development Needs a New Discipline

Agile optimized for human coordination. When agents write most of the code, the bottleneck shifts to context, specs, and governance. The discipline that addresses this is not about tools. It is about people and process.

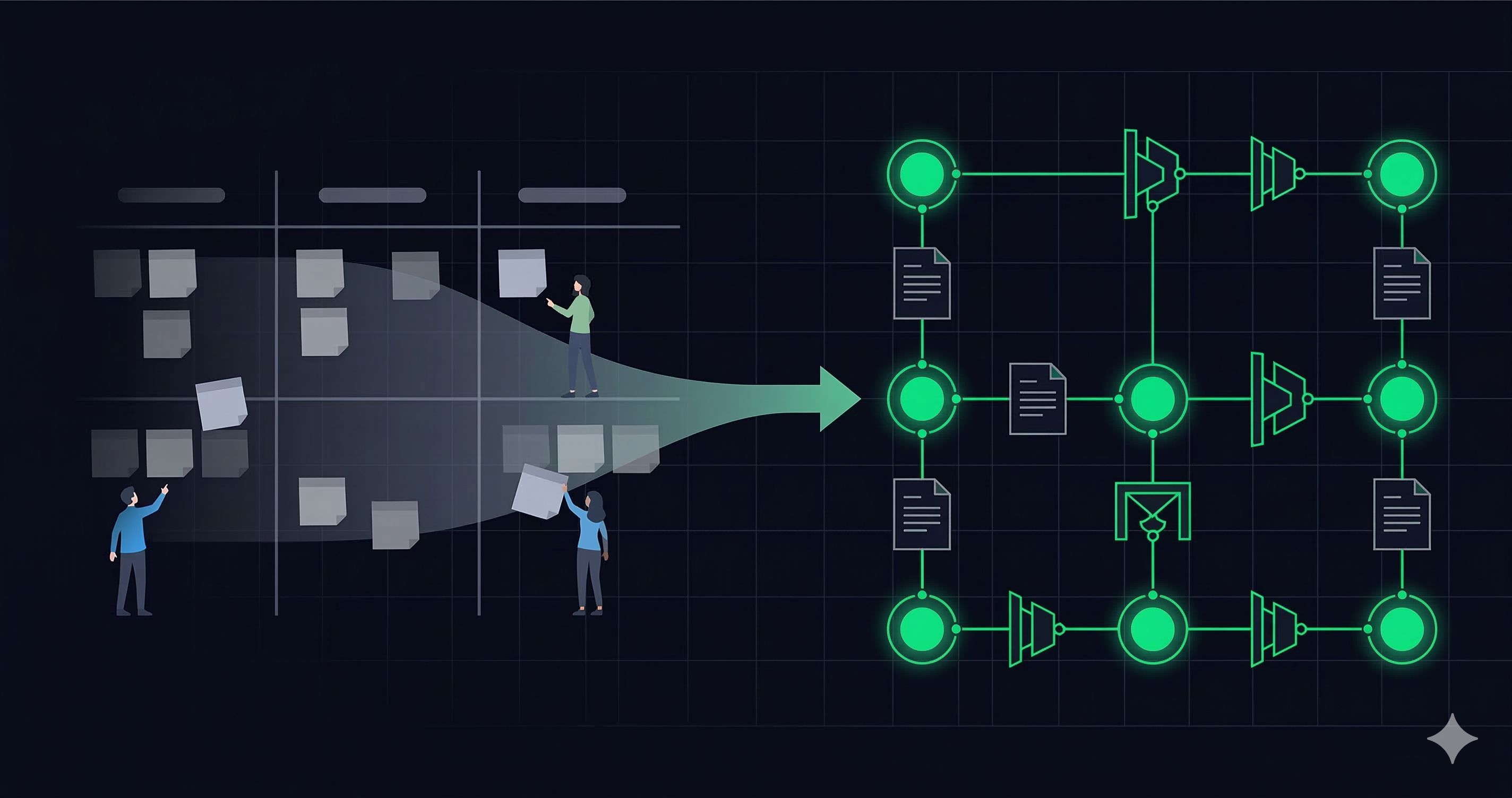

Software engineering practice is changing. When AI agents write most of the implementation, the challenge is no longer coordinating human developers. It is specifying what to build, governing what agents produce, and redesigning how teams operate. Multiple AI-native engineering frameworks have emerged to address parts of this problem, from spec-driven workflows to automated evaluation gates. But none of them fully addresses the organizational side: how roles evolve, how junior developers grow, which rituals teams should follow, and how governance scales with trust. This article proposes agentic development as a discipline that builds on these frameworks and on enterprise software development experience to address the full picture, people and process included.

The Shift That Already Happened

Agile was built on three assumptions. First, humans write all the code¹, so the bottleneck is coordination between people. Second, requirements are best expressed as narratives, specifically user stories that capture intent and leave implementation to the developer². Third, quality is assured through peer review, where another human reads the code before it ships³.

All three assumptions held for twenty years. None of them hold now.

According to Anthropic's 2026 Agentic Coding Trends Report, developers already use AI in 60% of their work. Agent-generated code is not a future scenario. It is the current operating condition. And when agents write most of the implementation, the mechanics of building software change in ways that Agile was never designed to handle.

Consider what happens when a team tries to run sprints with agent-generated output. Sprint velocity loses its meaning: when an agent completes a task in minutes rather than days, velocity measures nothing useful. Story points collapse because the effort is no longer in writing code but in specifying what the code should do and validating that it does so correctly. Standups become status meetings about agent output rather than coordination sessions between people doing the work. And code review does not scale: when agents produce ten times the volume of code, expecting humans to review every line is not diligence. It is a bottleneck.

This is not an attack on Agile. Agile solved the right problem for its time: how to coordinate human developers building software iteratively. The problem is that the operating conditions changed. The unit of work is no longer a human task estimated in story points. It is an agent-executable specification validated by automated gates. That shift requires a different discipline.

What Agentic Development Actually Is

Agentic development is not a framework. It is not a toolchain. It is not a vendor methodology.

It is a discipline focused on people and process: the sociotechnical changes required when AI agents become first-class participants in software development. How roles evolve. How teams organize. How trust is earned incrementally. How governance scales without becoming a bottleneck.

The tools matter, but they are implementations. Whether a team uses BMAD, Spec Kit, Kiro, or a homegrown approach, the people-and-process questions are the same: Who owns architecture when agents write the code? What artifacts does the team produce and maintain? How does a junior developer grow when the tasks they used to learn from are now automated? How does an organization move from full human oversight to selective oversight without losing control?

This distinction matters because the adjacent terms (vibe coding, agentic engineering, agentic coding) each describe something narrower.

Vibe coding is development without discipline. A developer describes what they want in natural language, the AI generates code, and the developer accepts it with minimal review. It works for prototypes and throwaway scripts, but it is explicitly not agentic development. The two are incompatible paradigms, not points on a spectrum, because vibe coding abandons the governance and specification rigor that agentic development requires.

Agentic engineering, as Addy Osmani and others describe it, is an individual practice: human oversight plus AI implementation. It is valuable, but it addresses only the developer's workflow, not the team, the organization, or the governance model.

Agentic coding is tool-centric: how to configure and use AI coding agents effectively. Important, but not sufficient.

Agentic development is the discipline that sits above all of these. It addresses people and organizations, not just technology. It is framework-agnostic by design, opinionated about principles and flexible about implementations.

Startup vs Enterprise: Same Tools, Different Discipline

A solo founder building with Claude Code and a 200-person engineering organization adopting Kiro both use AI agents to write code. But the people-and-process challenges they face are fundamentally different, and conflating them is one of the most common mistakes in this space.

In a startup or small team, the discipline is lightweight. A single developer can hold the full context of the system in their head. Specifications might be a CLAUDE.md file and a few well-structured prompts. Governance is implicit: the founder is the only decision-maker and reviews agent output directly. The feedback loop is tight: specify, generate, review, ship. The bottleneck is not coordination or governance but context quality, that is, whether the agent has enough information to produce correct output. A startup does not need a Hybrid Squad or a Flow Manager. It needs good specs and fast iteration.

In a complex enterprise, every one of those assumptions breaks. No single person holds the full context. Multiple teams interact with the same codebase, each with different domain knowledge. Specifications must be precise enough for agents to execute without access to the senior engineer who "just knows" how the billing module works. Governance cannot be implicit. When 50 agents are producing code across 12 teams, someone needs to decide which output gets deployed, how architectural consistency is maintained, and what happens when an agent produces something that passes all tests but violates an undocumented business rule.

This is where the people-and-process questions become unavoidable:

- Roles change. The Context Architect (someone who curates and structures the information agents consume) becomes as critical as the senior engineer. The Agent Operator (someone who monitors, tunes, and intervenes in agent workflows) is a role that did not exist two years ago.

- Team structures change. A Hybrid Squad is not a traditional Scrum team with an AI add-on. It is a different composition: fewer developers, more context and evaluation specialists, with agents as first-class team members whose output is governed, not just consumed.

- Governance must be structural. You cannot rely on a policy that says "review all agent output" when the volume makes that impossible. You need automated gates: deterministic checks that run before any probabilistic judgment, evaluation harnesses that validate output against golden samples, and clear escalation paths for when agents get stuck.

- The junior talent pipeline breaks. If agents absorb the tasks that junior developers used to learn from (bug fixes, small features, boilerplate code), then the organization must intentionally redesign how juniors build expertise. This is not a side effect to manage later. It is a structural problem to solve now.

The discipline of agentic development must be flexible enough to serve both contexts. A startup needs the principles without the overhead. An enterprise needs the principles operationalized into roles, ceremonies, and governance structures. The principles are the same. The implementation scales with organizational complexity.

The Landscape: Many Frameworks, One Missing Layer

The agentic development landscape in 2026 is not short on frameworks. BMAD offers 12 specialized agent personas. Spec Kit provides a six-phase workflow from constitution to implementation. Kiro integrates spec-driven development into an IDE. The Agentic AI Foundation launched under the Linux Foundation to standardize protocols and interoperability.

Every serious framework converges on three technical principles. First, specifications over prompts: structured, machine-readable specifications produce better agent output than conversational prompting. Second, context is the constraint: agent output quality is proportional to context quality, not model capability, and a mediocre model with excellent context outperforms a frontier model with vague instructions. Third, automated governance: human code review does not scale with agent throughput, so automated evaluation gates are required.

These are valuable contributions. Each framework solves a real piece of the puzzle: spec authoring, agent orchestration, governance automation, protocol standardization.

But there is a layer that none of them fully address: the human side.

Who owns architecture when agents write the code? How do teams reorganize when the ratio of human developers to agent-executed tasks shifts from 1:1 to 1:10? How do you grow junior talent when entry-level tasks are automated? How does governance scale with trust, moving from full oversight to selective oversight as the organization demonstrates reliability at each level?

Birgitta Böckeler's experiments with Spec-Driven Development, published on Martin Fowler's blog, revealed that even with detailed specifications, agents generated unrequested features and claimed success on failed builds. The specs were correct. The governance was missing. Tools alone do not solve the problem. The discipline around the tools is what makes them work in real organizations.

Agentic development as a discipline sits above any specific framework. It is the layer that addresses people and process, the questions that cut across every tool, every vendor, every methodology.

Draft Principles for Agentic Development

In February 2001, seventeen software practitioners met at a ski lodge in Snowbird, Utah. They disagreed on almost everything except one conviction: the heavyweight, document-driven processes dominating software development were failing. They produced the Agile Manifesto: four values and twelve principles that reshaped the industry for twenty years.

The operating conditions that made Agile necessary have shifted again. What follows is a draft: not a manifesto, not a standard, but a starting point for a community conversation. These principles are opinionated about people and process and deliberately agnostic about tools and frameworks.

Context over capability. The quality of agent output depends on input quality, not model intelligence. Fix the context, not the model. A team that invests in context architecture (structured, curated, versioned information that agents consume) will outperform a team chasing the latest model release. In practice, this means treating context as a first-class engineering artifact: a Context Index that is maintained, tested, and governed like code.
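The sketch below shows what "context as a first-class engineering artifact" could mean in practice. It is a minimal Python Context Index, checked the way code is linted in CI. The `ContextEntry` schema, the `check_context_index` function, and the 90-day staleness threshold are hypothetical illustrations, not part of any framework named here. The point is only that context can carry owners and review dates, and can fail a build when it decays.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class ContextEntry:
    """One curated piece of information agents consume (hypothetical schema)."""
    path: str            # where the context lives, e.g. a doc in the repo
    owner: str           # team or person accountable for keeping it accurate
    last_reviewed: date  # when a human last confirmed the content is current


def check_context_index(entries, today, max_age_days=90):
    """Lint the index like code: return a list of problems (empty means healthy)."""
    problems = []
    seen = set()
    for entry in entries:
        if entry.path in seen:
            problems.append(f"duplicate entry: {entry.path}")
        seen.add(entry.path)
        if not entry.owner:
            problems.append(f"no owner: {entry.path}")
        if today - entry.last_reviewed > timedelta(days=max_age_days):
            problems.append(f"stale (>{max_age_days} days): {entry.path}")
    return problems


index = [
    ContextEntry("docs/billing/rules.md", "billing-team", date(2026, 1, 10)),
    ContextEntry("docs/api/conventions.md", "", date(2025, 6, 1)),
]
# Flags the second entry twice: it has no owner and was last reviewed 245 days ago.
print(check_context_index(index, today=date(2026, 2, 1)))
```

In a CI pipeline, a non-empty result would fail the build, the same way a failing test does.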

Specs over prompts. Structured specifications, not conversational instructions. A Live Spec is a machine-readable document that defines what the agent should build, what constraints it must respect, and how the output will be validated. It is not a user story. It is not a prompt. It is a durable artifact that survives the implementation cycle and becomes the source of truth. The Spec-to-Code Ratio (how much specification exists relative to generated code) is a better health metric than lines of code or velocity.
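Here is a minimal Python sketch of a Live Spec and the Spec-to-Code Ratio. It assumes a spec reduces to the three concerns named above: a goal, constraints, and acceptance checks. The `LiveSpec` fields, the `is_executable` rule, and the line-count definition of the ratio are all illustrative assumptions. Real teams would more likely keep specs as versioned Markdown or YAML next to the code.

```python
from dataclasses import dataclass, field


@dataclass
class LiveSpec:
    """Hypothetical minimal Live Spec: what to build, what to respect, how to validate."""
    goal: str
    constraints: list[str] = field(default_factory=list)
    acceptance_checks: list[str] = field(default_factory=list)

    def is_executable(self) -> bool:
        # Assumed rule: an agent can act on a spec only if it states a goal
        # and at least one way to validate the output.
        return bool(self.goal.strip()) and len(self.acceptance_checks) > 0


def spec_to_code_ratio(spec_lines: int, code_lines: int) -> float:
    """Lines of specification per line of generated code (one possible definition)."""
    if code_lines <= 0:
        raise ValueError("code_lines must be positive")
    return spec_lines / code_lines


spec = LiveSpec(
    goal="Add CSV export to the invoice list",
    constraints=["Reuse the existing invoice repository", "No new third-party dependencies"],
    acceptance_checks=["pytest tests/test_invoice_export.py"],
)
print(spec.is_executable())         # True
print(spec_to_code_ratio(40, 200))  # 0.2
```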

Governance by structure, not policy. Deterministic gates before probabilistic judgment. A policy that says "review all agent output" fails the moment volume exceeds human capacity. Governance must be structural: automated checks that run on every output, evaluation harnesses that compare results against golden samples, and clear escalation paths when output fails validation. Trust the gate, not the memo.
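The ordering "deterministic gates before probabilistic judgment" can be sketched as a small pipeline. In this hypothetical Python example, deterministic checks run first and fail fast. Only output that passes them is compared against a golden sample, and any failure is routed to an escalation path instead of shipping. `difflib.SequenceMatcher` stands in for a real evaluation harness, which might use test suites or a model-based judge; it is used only so the sketch runs with the standard library. All function names are assumptions.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import Callable


@dataclass
class GateResult:
    passed: bool
    stage: str        # which stage produced the verdict
    reason: str = ""


def run_gates(output: str,
              deterministic_checks: list[tuple[str, Callable[[str], bool]]],
              golden_sample: str,
              threshold: float = 0.8) -> GateResult:
    # Stage 1: deterministic gates run on every output and fail fast.
    for name, check in deterministic_checks:
        if not check(output):
            return GateResult(False, "deterministic", f"failed check: {name}")
    # Stage 2: evaluation against a golden sample, reached only if stage 1 passed.
    # SequenceMatcher is a stand-in for a real evaluation harness.
    score = SequenceMatcher(None, output, golden_sample).ratio()
    if score < threshold:
        return GateResult(False, "evaluation", f"similarity {score:.2f} < {threshold}")
    return GateResult(True, "passed")


def route(result: GateResult) -> str:
    # Escalation path: failed output goes to a human queue instead of shipping.
    if result.passed:
        return "deploy"
    return f"escalate ({result.stage}): {result.reason}"


checks = [
    ("non-empty", lambda s: bool(s.strip())),
    ("no TODO markers", lambda s: "TODO" not in s),
]
golden = "def add(a, b):\n    return a + b\n"
print(route(run_gates(golden, checks, golden)))    # deploy
print(route(run_gates("# TODO", checks, golden)))  # escalate (deterministic): failed check: no TODO markers
```

Putting the cheap, reproducible checks first means the costlier evaluation step only ever sees output that is already structurally valid.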

Graduated autonomy. Earned trust, not binary delegation. Organizations should not choose between "human does everything" and "agent does everything." There is a progression: observe agent output → let agents suggest → let agents act with human oversight → let agents act autonomously. Each level is earned through demonstrated reliability at the previous level. Skip a level, and you lose control. Stay too long at an early level, and you lose the productivity gains.
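The progression can be modeled as ordered levels with a promotion rule that never skips a step. This Python sketch is illustrative only. The level names follow the progression above. The `next_level` function, the 50-attempt minimum, and the 95% success threshold are hypothetical values a team would tune to its own risk tolerance.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    OBSERVE = 0             # agent produces output; humans only observe it
    SUGGEST = 1             # agent suggests; humans decide and apply
    ACT_WITH_OVERSIGHT = 2  # agent acts; a human approves before it takes effect
    AUTONOMOUS = 3          # agent acts on its own


def next_level(current: Autonomy, successes: int, attempts: int,
               min_attempts: int = 50, required_rate: float = 0.95) -> Autonomy:
    """Promote by at most one level, and only with enough evidence at the current one."""
    if current == Autonomy.AUTONOMOUS:
        return current                # already at the top
    if attempts < min_attempts:
        return current                # not enough track record yet
    if successes / attempts >= required_rate:
        return Autonomy(current + 1)  # earned: move up exactly one level
    return current                    # not earned: stay at the current level


print(next_level(Autonomy.SUGGEST, successes=48, attempts=50).name)    # ACT_WITH_OVERSIGHT
print(next_level(Autonomy.OBSERVE, successes=200, attempts=200).name)  # SUGGEST
```

Even a perfect record at `OBSERVE` only earns `SUGGEST`. That is the "skip a level, and you lose control" rule expressed as code. Demotion after a serious failure is left out here, and a real policy would likely need it.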

Recovery over retry. Human intervention when agents get stuck is a feature of the system, not a failure. An Agent Recovery (a structured process for a human to take over when an agent cannot proceed) should be designed, documented, and measured. The metric is Mean Time to Unblock, not whether the agent succeeded on the first attempt.
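Mean Time to Unblock is easy to compute once recoveries are logged. This sketch assumes each Agent Recovery is recorded as a hypothetical `(stuck_at, unblocked_at)` timestamp pair. How those timestamps are captured (ticketing system, agent logs, chat) is left open.

```python
from datetime import datetime, timedelta


def mean_time_to_unblock(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Average time from 'agent is stuck' to 'human has unblocked it'.

    Each incident is a (stuck_at, unblocked_at) pair; returns timedelta(0) if none.
    """
    if not incidents:
        return timedelta(0)
    durations = []
    for stuck_at, unblocked_at in incidents:
        if unblocked_at < stuck_at:
            raise ValueError("unblocked_at must not precede stuck_at")
        durations.append(unblocked_at - stuck_at)
    return sum(durations, timedelta(0)) / len(durations)


incidents = [
    (datetime(2026, 3, 2, 9, 0), datetime(2026, 3, 2, 9, 20)),    # 20 minutes
    (datetime(2026, 3, 2, 14, 0), datetime(2026, 3, 2, 14, 40)),  # 40 minutes
]
print(mean_time_to_unblock(incidents))  # 0:30:00
```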

People over automation. Redesign roles intentionally, especially the junior talent pipeline. When agents absorb entry-level implementation tasks, organizations must create new paths for junior developers to build expertise through context curation, spec writing, evaluation design, and supervised agent operation. Automation without intentional role redesign does not create efficiency. It creates a skills gap that surfaces two years later when nobody on the team understands why the system works the way it does.

These principles are a draft. They are meant to be challenged, refined, and debated by practitioners who are building with agents daily. If any of them are wrong, the community should say so and propose something better.

What We Are Building, and What Is Next

The Agentic Development initiative is a framework-agnostic knowledge base built by practitioners. It is not a competing framework. It is a shared vocabulary and a set of people-and-process practices that apply regardless of which tools or spec-driven methodology a team adopts.

The knowledge base includes a handbook covering the foundational concepts: context architecture, spec-driven development, orchestration patterns, and governance models. It includes patterns: reusable approaches such as Agent Recovery workflows, gate-based governance, and context-first architecture. It includes a glossary that defines the emerging vocabulary (from Ephemeral Workbench to Triangular Workflow to Continuous Development Loop) so teams can communicate precisely. And it includes playbooks: step-by-step guides for adopting practices such as Hybrid Squad setup and eval harness implementation.

The content is available on agentic-dev.org. Practitioners can join the community for free to contribute content, share implementation experience, and access members-only material. The discipline is bigger than any single tool, and the knowledge base should reflect that.

What is next: more patterns grounded in real implementation experience. More playbooks for the organizational transitions: how to restructure teams, how to redesign the junior developer path, how to introduce graduated autonomy without losing governance. And, critically, more practitioners willing to join the conversation. The Agile Manifesto was written by seventeen people who disagreed on specifics but agreed on principles. This initiative needs the same: practitioners who are willing to challenge, refine, and improve what we have started.

The tools will keep evolving. The models will keep improving. The frameworks will keep multiplying. But the people-and-process questions (how teams organize, how roles evolve, how governance scales, how trust is built) are the questions that determine whether any of it works.

That is what agentic development is about. Not the tools. The discipline.

Footnotes

1. This was an implicit assumption, never stated explicitly because no alternative existed in 2001. The Manifesto's values and principles ("individuals and interactions," self-organizing teams, face-to-face conversation) all presuppose human implementation.

2. User stories originate from Extreme Programming (Kent Beck, 1999) and were popularized by Mike Cohn's User Stories Applied (2004). They are not a Manifesto artifact but the dominant requirements format adopted by Agile teams. The Manifesto's preference for "working software over comprehensive documentation" created the space for lightweight formats like stories.

3. Code review and pair programming are XP practices, not Manifesto principles. The Manifesto calls for "continuous attention to technical excellence." Peer review became the most common way teams operationalized this.